We’ve run over 100 technical audits this year.

Through this we’ve gained deep insights into how technical structure impacts a website’s performance in search.

This article highlights the most common technical SEO issues we encounter, and the ones that have the largest impact on organic traffic when corrected.

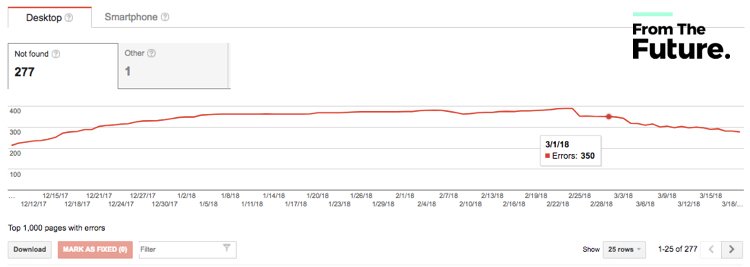

1. Mismanaged 404 errors

This happens quite a bit on eCommerce sites. When a product is removed or expires, it’s simply forgotten and the page “404s”.

Although 404 errors can erode your crawl budget, they won’t necessarily kill your SEO. Google understands that sometimes you HAVE to delete pages on your site.

However, 404 pages can be a problem when they:

- Are getting traffic (internally and from organic search)

- Have external links pointing to them

- Have internal links pointing to them

- Exist in large numbers on a larger website

- Are shared on social media / around the web

The best practice is to set up a 301 redirect from the deleted page to another relevant page on your site. This will preserve the SEO equity and make sure users can navigate seamlessly.

How to find these errors

- Run a full website crawl (SiteBulb, DeepCrawl or Screaming Frog) to find all 404 pages

- Check Google Search Console reporting (Crawl > Crawl Errors)

How to fix these errors

- Analyze the list of “404” errors on your website

- Crosscheck those URLs with Google Analytics to understand which pages were getting traffic

- Crosscheck those URLs with Google Search Console to understand which pages had inbound links from outside websites

- For those pages of value, identify an existing page on your website that is most relevant to the deleted page

- Set up “server-side” 301 redirects from the 404 page to the existing page you’ve identified. If you are going to use a 4XX page, make sure that page is actually functional so it doesn’t hurt the user experience
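
The crosscheck step above can be sketched in a few lines of Python. This is only an illustration: the `DeadPage` fields, the thresholds and the sample URLs are assumptions standing in for whatever your crawler, Google Analytics and Google Search Console exports actually contain.

```python
from dataclasses import dataclass


@dataclass
class DeadPage:
    url: str
    monthly_visits: int  # hypothetical field, e.g. from an analytics export
    inbound_links: int   # hypothetical field, e.g. from a Search Console export


def pages_worth_redirecting(pages, min_visits=1, min_links=1):
    """Return 404 pages that still get traffic or links, most valuable first."""
    valuable = [
        p for p in pages
        if p.monthly_visits >= min_visits or p.inbound_links >= min_links
    ]
    # Rank by inbound links first, then by visits (an arbitrary choice).
    return sorted(
        valuable,
        key=lambda p: (p.inbound_links, p.monthly_visits),
        reverse=True,
    )


# Made-up sample data for illustration only.
dead_pages = [
    DeadPage("/products/old-widget", monthly_visits=120, inbound_links=4),
    DeadPage("/products/never-visited", monthly_visits=0, inbound_links=0),
    DeadPage("/blog/expired-offer", monthly_visits=15, inbound_links=0),
]

for page in pages_worth_redirecting(dead_pages):
    print(page.url)
```

Pages that drop out of the list have neither traffic nor links, so they are the ones you can usually leave as a 404.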

2. Website migration issues

When launching a new website, design changes or new pages, there are a number of technical issues that should be addressed ahead of time.

Common errors we see:

- Use of 302 (temporary) redirects instead of 301 (permanent) redirects. While Google recently stated that 302 redirects pass SEO equity, we hedge based on internal data that shows 301 redirects are the better option

- Improper setup of HTTPS on a website. Specifically, not redirecting the HTTP version of the site to HTTPS, which can cause issues with duplicate pages

- Not carrying over 301 redirects from the previous site to the new site. This often happens if you’re using a plugin for 301 redirects – 301 redirects should always be set up through a website’s cPanel

- Leaving legacy tags on the site from the staging domain. For example, canonical tags, NOINDEX tags, etc. that prevent pages on your staging domain from being indexed

- Leaving staging domains indexed. This is the opposite of the previous item: you do NOT place the proper tags on staging domains (or subdomains) to keep them out of SERPs (either with NOINDEX tags or by blocking crawl via the robots.txt file)

- Creating “redirect chains” when cleaning up legacy websites. In other words, not properly identifying pages that were previously redirected before moving forward with a new set of redirects

- Not saving the forced www or non-www version of the site in the .htaccess file. This causes 2 (or more) instances of your website to be indexed in Google, causing issues with duplicate pages being indexed

How to find these errors

- Run a full website crawl (SiteBulb, DeepCrawl or Screaming Frog) to get the needed data inputs

How to fix these errors

- Triple check to make sure your 301 redirects migrated properly

- Test your 301 and 302 redirects to make sure they go to the right place on the first step

- Check canonical tags in the same way and ensure you have the right canonical tags in place

- Given a choice between canonicalizing a page and 301 redirecting a page, a 301 redirect is the safer, stronger option

- Check your code to ensure you remove NOINDEX tags (if used on the staging domain). Don’t just uncheck the options in the plugins. Your developer may have hardcoded NOINDEX into the theme header – Appearance > Themes > Editor > header.php

- Update your robots.txt file

- Check and update your .htaccess file
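
To make the redirect chain check concrete, here is a minimal Python sketch. It assumes you have already exported your redirects as a simple source-to-target mapping; the `legacy` paths below are invented for illustration.

```python
def resolve_redirect(url, redirects, max_hops=10):
    """Follow a redirect map and return (final_url, number_of_hops)."""
    hops = 0
    seen = {url}
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops


def flatten_redirects(redirects):
    """Point every source URL straight at its final destination."""
    return {src: resolve_redirect(src, redirects)[0] for src in redirects}


# Hypothetical redirect map exported from the old and new site.
legacy = {
    "/old-page": "/interim-page",
    "/interim-page": "/new-page",
    "/about-us": "/about",
}

# Any source that needs more than one hop is part of a chain.
chains = sorted(src for src in legacy if resolve_redirect(src, legacy)[1] > 1)
print(chains)
print(flatten_redirects(legacy))
```

The flattened map sends each legacy URL to its final page on the first step, which is what the redirect test above is checking for.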

3. Website speed

Google has confirmed that website speed is a ranking factor – they expect pages to load in 2 seconds or less. More importantly, website visitors won’t wait around for a page to load.

In other words, slow websites don’t make money.

Optimizing for website speed will require the help of a developer, as the most common issues slowing down websites are:

- Large, unoptimized images

- Poorly written (bloated) website code

- Too many plugins

- Heavy JavaScript and CSS

How to find these errors

How to fix these errors

- Hire a developer with experience in this area (find out how FTF can help)

- Make sure you have a staging domain set up so website performance isn’t hindered

- Where possible, make sure you have upgraded to PHP7 if you use WordPress or another PHP CMS. This will have a big impact on speed.

4. Not optimizing the mobile User Experience (UX)

Google’s index is officially mobile first, which means that the algorithm looks at the mobile version of your site first when ranking for queries.

With that being said, don’t neglect the desktop experience (UX) or simplify the mobile experience significantly compared to the desktop.

Google has said it wants both experiences to be the same. Google has also stated that those using responsive or dynamically served pages shouldn’t be affected when the change comes.

How to find these errors

- Use Google’s Mobile-Friendly Test to check if Google sees your site as mobile-friendly

- Check to see if “smartphone Googlebot” is crawling your site – it hasn’t rolled out everywhere yet

- Does your website respond to different devices? If your site doesn’t work on a mobile device, now is the time to get that fixed

- Got unusable content on your site? Check to see if it loads or if you get error messages. Make sure you fully test all your site pages on mobile

How to fix these errors

- Understand the impact of mobile on your server load

- Focus on building your pages from a mobile-first perspective. Google likes responsive sites, and they are its preferred option for delivering mobile sites. If you currently run a standalone subdomain such as m.yourdomain.com, look at the potential impact of increased crawling on your server

- If you need to, consider a template update to make the theme responsive. Just using a plugin might not do what you need, or might cause other issues. Find a developer who can build responsive themes from scratch

- Focus on multiple mobile breakpoints, not just your brand new iPhone X. 320px wide (iPhone 5 and SE) is still super important

- Test across iPhone and Android

- If you have content that needs “fixing” (Flash or other proprietary systems that don’t work on your mobile journey), consider moving to HTML5, which will render on mobile. Google Web Designer will allow you to reproduce Flash files in HTML

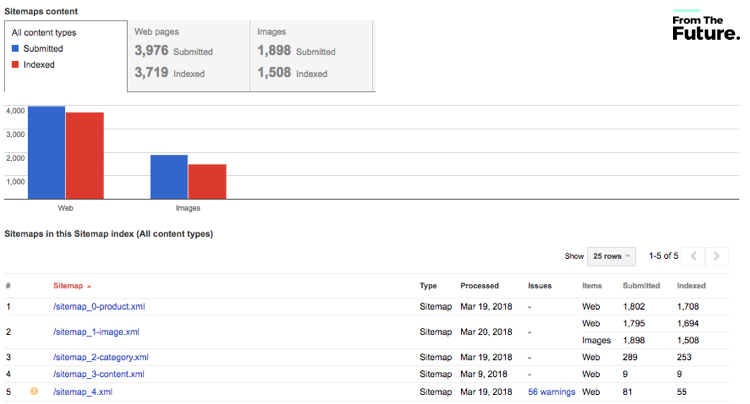

5. XML Sitemap issues

An XML Sitemap lists out URLs on your site that you want to be crawled and indexed by search engines. You’re allowed to include information about each page:

- When it was last updated

- How often it changes

- How important it is in relation to other URLs in the site (i.e., priority)

While Google admittedly ignores a lot of this information, it’s still important to optimize properly, particularly on large websites with complicated architectures.

Sitemaps are particularly beneficial on websites where:

- Some areas of the website are not available through the browsable interface

- Webmasters use rich Ajax, Silverlight or Flash content that is not normally processed by search engines

- The site is very large, and there is a chance for the web crawlers to overlook some of the new or recently updated content

- There are a huge number of pages that are isolated or not well linked together

- “Crawl budget” is being misused on unimportant pages. If this is the case, you’ll want to block crawl / NOINDEX

How to find these errors

- Make sure you have submitted your sitemap to your GSC

- Also remember to use Bing Webmaster Tools to submit your sitemap

- Check your sitemap for errors: Crawl > Sitemaps > Sitemap Errors

- Check the log files to see when your sitemap was last accessed by bots

How to fix these errors

- Make sure your XML sitemap is connected to your Google Search Console

- Run a server log analysis to understand how often Google is crawling your sitemap. There are lots of other things we’ll cover using our server log files later on

- Google will show you the issues and examples of what it sees as an error so you can correct them

- If you are using a plugin for sitemap generation, make sure it is up to date and that the file it generates works by validating it

- If you don’t want to use Excel to check your server logs, you can use a server log analytics tool such as Logz.io, Graylog, SEOlyzer (great for WP sites) or Loggly to see how your XML sitemap is being used
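
As a rough illustration of validating a generated sitemap, the Python sketch below parses a sitemap document and flags a few common problems. The specific checks (non-HTTPS URLs, unexpected hosts, duplicate entries) and the sample document are assumptions chosen for the example, not an exhaustive validator.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_problems(xml_text, expected_host):
    """Return a list of (url, problem) pairs found in a sitemap document."""
    root = ET.fromstring(xml_text.strip())
    problems = []
    seen = set()
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        parts = urlparse(url)
        if parts.scheme != "https":
            problems.append((url, "not https"))
        if parts.netloc != expected_host:
            problems.append((url, "unexpected host"))
        if url in seen:
            problems.append((url, "duplicate entry"))
        seen.add(url)
    return problems


# Made-up sitemap for illustration only.
SAMPLE = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>http://www.example.com/old</loc></url>
  <url><loc>https://www.example.com/</loc></url>
</urlset>
"""

for url, problem in sitemap_problems(SAMPLE, "www.example.com"):
    print(url, "->", problem)
```

A script like this complements, rather than replaces, the error report in Google Search Console.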

6. URL Structure issues

As your website grows, it’s easy to lose track of URL structures and hierarchies. Poor structures make it difficult for both users and bots to navigate, which will negatively impact your rankings.

- Issues with website structure and hierarchy

- Not using a proper folder and subfolder structure

- URLs with special characters, capital letters or that are not useful to humans

How to find these errors

- 404 errors, 302 redirects and issues with your XML sitemap are all signs of a site that needs its structure revisited

- Run a full website crawl (SiteBulb, DeepCrawl or Screaming Frog) and manually review for quality issues

- Check Google Search Console reporting (Crawl > Crawl Errors)

- User testing: ask people to find content on your site or make a test purchase, and use a UX testing service to record their experiences

How to fix these errors

- Plan your site hierarchy – we always recommend parent-child folder structures

- Make sure all content is placed in its correct folder or subfolder

- Make sure your URL paths are easy to read and make sense

- Remove or consolidate any content that looks to rank for the same keyword

- Try to limit the number of subfolders / directories to no more than three levels
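
A quick way to screen a crawl export against these rules is a small Python check like the one below. It is a sketch under stated assumptions: “special characters” is taken to mean anything other than lowercase letters, digits, hyphens and dots, and every path segment except the last is counted as a folder.

```python
import re
from urllib.parse import urlparse

# Assumption: a "clean" segment uses only letters, digits, hyphens and dots.
SAFE_SEGMENT = re.compile(r"^[a-z0-9.-]+$")


def url_structure_issues(url, max_depth=3):
    """Return a list of structural issues found in a single URL."""
    path = urlparse(url).path
    segments = [s for s in path.split("/") if s]
    folders = segments[:-1]  # assumption: the last segment is the page itself
    issues = []
    if any(c.isupper() for c in path):
        issues.append("capital letters")
    if any(not SAFE_SEGMENT.match(s.lower()) for s in segments):
        issues.append("special characters")
    if len(folders) > max_depth:
        issues.append(f"more than {max_depth} folder levels")
    return issues


# Made-up URLs for illustration only.
for candidate in [
    "https://example.com/shop/shoes/blue-trail-shoe",
    "https://example.com/Shop/Mens_Shoes/item",
    "https://example.com/a/b/c/d/page",
]:
    print(candidate, url_structure_issues(candidate))
```

Anything flagged still deserves a manual review, since the check knows nothing about whether a URL is useful to humans.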

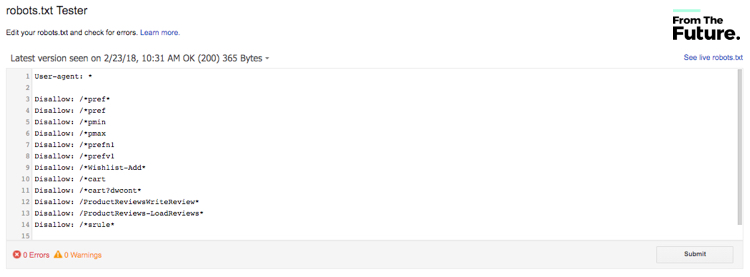

7. Issues with robots.txt file

A robots.txt file controls how search engines access your website. It’s a commonly misunderstood file that can crush your website’s indexation if misused.

Most problems with robots.txt tend to arise from not changing it when you move from your development environment to live, or from miscoding the syntax.

How to find these errors

- Check your site stats (i.e. Google Analytics) for big drops in traffic

- Check Google Search Console reporting (Crawl > robots.txt tester)

How to fix these errors

- Check Google Search Console reporting (Crawl > robots.txt tester). This will validate your file

- Check to make sure the pages/folders you DON’T want to be crawled are included in your robots.txt file

- Make sure you are not blocking any important directories (JS, CSS, 404, etc.)
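
The last check above can be automated with Python’s standard-library `urllib.robotparser`. The robots.txt content and the list of paths below are made up for the example; swap in your own file and the URLs you need crawled.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that the given robots.txt text blocks for a crawler."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


# Hypothetical robots.txt that accidentally blocks a JavaScript folder.
ROBOTS = """\
User-agent: *
Disallow: /cart/
Disallow: /assets/js/
"""

must_be_crawlable = ["/", "/products/blue-shoe", "/assets/js/app.js"]
print(blocked_paths(ROBOTS, must_be_crawlable))
```

Note that this parser does plain prefix matching and may not mirror how Google treats wildcard rules, so treat it as a first pass and confirm with the robots.txt tester.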

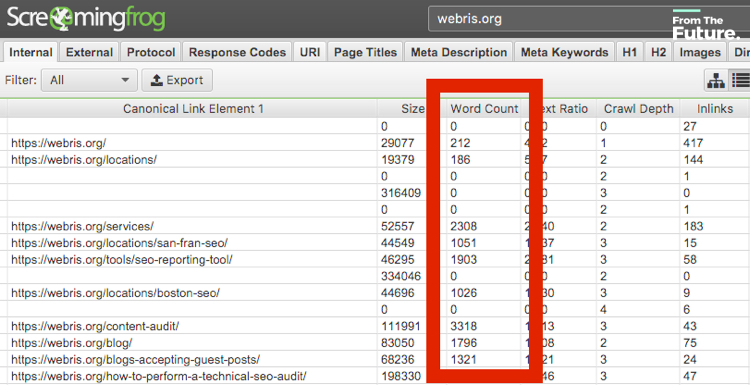

8. Too much thin content

It’s not a good idea to crank out pages for “SEO” purposes. Google wants to rank pages that are deep, informative and provide value.

Having too much “thin” content (i.e. less than 500 words, no media, lack of purpose) can negatively impact your SEO. Some of the reasons:

- Content that doesn’t resonate with your target audience will kill conversion and engagement rates

- Google’s algorithm looks heavily at content quality, trust and relevancy (aka having crap content can hurt rankings)

- Too much low quality content can decrease search engine crawl rate, indexation rate and ultimately, traffic

- Rather than producing content around each keyword, collect the content into common themes and write much more detailed, useful content.

How to find these errors

- Run a crawl to find pages with a word count of less than 500

- Check your GSC for manual messages from Google (GSC > Messages)

- Look for pages that don’t rank for the keywords you’re writing content for, or that suddenly lose rankings

- Check your page bounce rates and user dwell time – pages with high bounce rates are a warning sign

How to fix these errors

- Cluster keywords into themes so rather than writing one keyword per page you can place 5 or 6 in the same piece of content and expand it.

- Work on pages that try to keep the user engaged with a variety of content – consider video or audio, infographics or images – if you don’t have these skills find them on Upwork, Fiverr, or PPH.

- Think about your user first – what do they want? Create content around their needs.
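
If your crawler doesn’t report word counts, a rough count is easy to approximate in Python with the standard-library HTML parser. This sketch counts every visible word on the page (navigation and footer included), so treat the 500-word threshold as a screening aid only; the `pages` mapping in the usage line is hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Counts words in visible text, ignoring script and style blocks."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())


def word_count(html):
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.words


def thin_pages(pages, threshold=500):
    """Return URLs whose HTML has fewer words than the threshold."""
    return sorted(url for url, html in pages.items() if word_count(html) < threshold)


# Hypothetical input: a mapping of URL to raw HTML from your crawl.
pages = {"/short": "<p>Only a few words here</p>"}
print(thin_pages(pages))
```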

9. Too much irrelevant content

In addition to “thin” pages, you want to make sure your content is “relevant”. Irrelevant pages that don’t help the user can also detract from the good stuff you have on site.

This is particularly important if you have a small, less authoritative website. Google crawls smaller websites less than more authoritative ones. We want to make sure we’re only serving Google our best content to increase that trust, authority and crawl budget.

Some common scenarios

- Creating boring pages with low engagement

- Letting search engines crawl “non-SEO” pages

How to find these errors

- Review your content strategy. Focus on creating better pages as opposed to more

- Check your Google crawl stats and see what pages are being crawled and indexed

How to fix these errors

- Remove quotas in your content planning. Add content that adds value rather than the six blog posts you NEED to publish because that’s what your plan says

- Add pages to your robots.txt file that you would rather not see Google rank. In this way, you are focusing Google on the good stuff

10. Misuse of canonical tags

A canonical tag (aka “rel=canonical”) is a piece of HTML that helps search engines decipher duplicate pages. If you have two pages that are the same (or similar), you can use this tag to tell search engines which page you want to show in search results.

If your website runs on a CMS like WordPress or Shopify, you can easily set canonical tags using a plugin (we like Yoast).

We often find websites that misuse canonical tags, in a number of ways:

- Canonical tags pointing to the wrong pages (i.e. pages not relevant to the current page)

- Canonical tags pointing to 404 pages (i.e., pages that no longer exist)

- Missing a canonical tag altogether

- eCommerce and “faceted navigation”

- When a CMS creates two versions of a page

This is significant, as you’re telling search engines to focus on the wrong pages on your website. This can cause massive indexation and ranking issues. The good news is, it’s an easy fix.

How to find these errors

- Run a site crawl in DeepCrawl

- Compare “Canonical link element” to the root URL to see which pages are using canonical tags to point to a different page

How to fix these errors

- Review pages to determine if canonical tags are pointing to the wrong page

- Also, you will want to run a content audit to understand which pages are similar and need a canonical tag
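
For a handful of pages you can run the same comparison yourself. The Python sketch below pulls the canonical link out of a page’s HTML and classifies it; the status labels and the `known_404s` set are assumptions made for the example.

```python
from html.parser import HTMLParser


class _CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel:
            self.canonicals.append(attr.get("href"))


def canonical_status(page_url, html, known_404s=frozenset()):
    """Classify how a page uses its canonical tag."""
    finder = _CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(finder.canonicals) > 1:
        return "multiple"
    target = finder.canonicals[0]
    if target in known_404s:
        return "points to 404"
    if target != page_url:
        return "points elsewhere"
    return "self-referencing"


# Made-up faceted navigation URL pointing at its base category page.
html = '<head><link rel="canonical" href="https://example.com/shoes"/></head>'
print(canonical_status("https://example.com/shoes?color=red", html))
```

A “points elsewhere” result isn’t automatically wrong (it is exactly what you want for faceted URLs); it simply marks the pages to review by hand.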

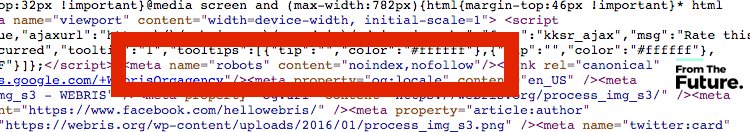

11. Misuse of robots tags

As well as your robots.txt file, there are also robots tags that can be used in your header code. We see a lot of potential issues with these, both at file level and on individual pages. In some cases, we have seen multiple robots tags on the same page.

Google will struggle with this and it can prevent a good, optimized page from ranking.

How to find these errors

- Check your source code in a browser to see if a robots tag has been added more than once

- Check the syntax and don’t confuse the nofollow link attribute with the nofollow robots tag

How to fix these errors

- Decide how you will manage/control robots activity. Yoast SEO gives you some pretty good abilities to manage robots tags at a page level

- Make sure you use one plugin to manage robots activity

- Make sure you amend any file templates where robots tags have been added manually: Appearance > Themes > Editor > header.php

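- You can add Nofollow directives to the robots.txt file instead of going file by file, but be aware that Google does not officially support nofollow rules in robots.txt, so confirm the result rather than relying on it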
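
Checking for repeated or conflicting robots tags can be scripted too. This Python sketch only looks at `<meta name="robots">` tags in the HTML; it does not inspect HTTP headers or bot-specific tags, and the sample markup is invented.

```python
from html.parser import HTMLParser


class _RobotsMetaFinder(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []  # one set of directives per robots meta tag

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            self.directives.append(
                {d.strip().lower() for d in content.split(",") if d.strip()}
            )


def robots_meta_report(html):
    """Summarize how many robots meta tags exist and whether they conflict."""
    finder = _RobotsMetaFinder()
    finder.feed(html)
    combined = set().union(*finder.directives)
    conflict = ("index" in combined and "noindex" in combined) or (
        "follow" in combined and "nofollow" in combined
    )
    return {
        "tag_count": len(finder.directives),
        "noindex": "noindex" in combined,
        "conflict": conflict,
    }


# Made-up page where a theme and a plugin each added a robots tag.
page = (
    '<head><meta name="robots" content="index, follow">'
    '<meta name="robots" content="noindex"></head>'
)
print(robots_meta_report(page))
```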

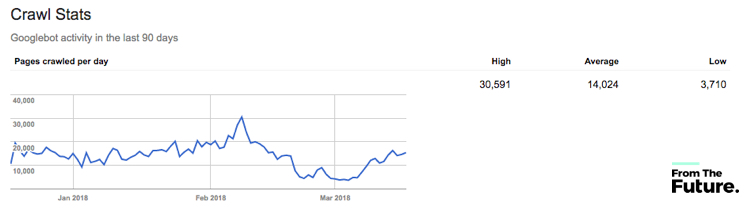

12. Mismanaged crawl budget

It’s a challenge for Google to crawl all the content on the web. In order to save time, Googlebot has a budget it allocates to sites depending on a number of factors.

A more authoritative site will have a bigger crawl budget (it crawls and indexes more content) than a lower authority site, which will have fewer pages and fewer visits. Google itself defines this as “Prioritizing what to crawl, when and how much resource the server hosting the site can allocate to crawling.”

How to find these errors

- Find out what your crawl stats are in GSC: Search Console > Select your domain > Crawl > Crawl Stats

- Use your server logs to find out what Googlebot is spending the most time doing on your site. This will tell you whether it is on the right pages. Use a tool such as Botify if spreadsheets make you nauseous.

How to fix these errors

- Reduce the errors on your site

- Block pages you don’t really want Google crawling

- Reduce redirect chains by finding all the links that point to a page that itself is redirected, and update those links to the new final page

- Fixing some of the other issues we have discussed above will go a long way to help increase your crawl budget or focus your crawl budget on the right content

- For ecommerce specifically, block the parameter tags that are used for faceted navigation without changing the actual content on a page

Check out our detailed guide on how to improve crawl budget
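
If you want a quick look at the server logs before reaching for a dedicated tool, a short Python script can count Googlebot requests per path. This sketch assumes the common “combined” access log format, with the user agent as the last quoted field, and it matches on the user agent string alone, which can be spoofed; the log lines are fabricated samples.

```python
import re
from collections import Counter

# Assumes the "combined" log format: request, status, size, referer, agent.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(log_lines):
    """Count requests per path (query string removed) made by Googlebot."""
    hits = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path").split("?")[0]] += 1
    return hits


# Fabricated sample lines for illustration only.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /shoes HTTP/1.1" 200 2326 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Oct/2023:13:55:40 +0000] "GET /shoes?color=red HTTP/1.1" 200 2326 "-" "{GOOGLEBOT}"',
    '203.0.113.9 - - [10/Oct/2023:13:56:01 +0000] "GET /about HTTP/1.1" 200 1500 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

for path, count in googlebot_hits(sample_log).most_common():
    print(path, count)
```

Sorting the counts shows where the crawl budget is actually going, which you can compare against the pages you want crawled.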

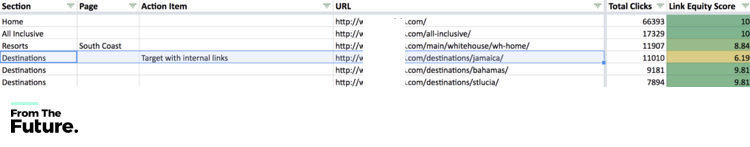

13. Not leveraging internal links to pass equity

Internal links help to distribute “equity” across a website. Lots of sites, especially those with thin or irrelevant content, tend to have a lower amount of cross-linking within the site content.

Cross-linking articles and posts helps Google and your site visitors move around your website. The added value of this from a technical SEO perspective is that you can pass equity across the website. This helps with improved keyword ranking.

How to find these errors

- For pages you are trying to rank, look at what internal pages link to them. This can be done in Google Analytics – do they have any internal inbound links?

- Run an inlinks crawl using Screaming Frog

- You will know yourself if you actively link to other pages on your site

- Are you adding internal nofollow links via a plugin that is applying this to all links? Check the link code in a browser by inspecting or viewing the source code

- Are you using the same small number of anchor tags and links on your site?

How to fix these errors

- For pages you are trying to rank, find existing site content (pages and posts) that can link to the page you want to improve ranking for, and add internal links

- Use the crawl data from Screaming Frog to identify opportunities for more internal linking

- Don’t overcook the number of links and the keywords used to link – make it natural and across the board

- Check your nofollow link rules in any plugin you are using to manage linking
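
Once you have crawl data, spotting pages that are starved of internal links is a simple counting exercise. The Python sketch below assumes the crawl has been reduced to a mapping of each page to the pages it links to; the `site` graph and the two-link threshold are illustrative choices.

```python
from collections import Counter


def inlink_counts(link_graph):
    """Count distinct internal pages linking to each page."""
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):
            if target != source:  # ignore self-links
                counts[target] += 1
    return counts


def weakly_linked(link_graph, min_inlinks=2):
    """Return pages with fewer internal inlinks than the threshold."""
    counts = inlink_counts(link_graph)
    return sorted(p for p, n in counts.items() if n < min_inlinks)


# Hypothetical crawl output: page -> pages it links to.
site = {
    "/": ["/blog", "/services"],
    "/blog": ["/services", "/blog/post-1"],
    "/services": ["/"],
    "/blog/post-1": [],
}
print(weakly_linked(site))
```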

14. Errors with page “on page” markup

Title tags and metadata are some of the most abused code on websites and have been since Google has been crawling websites. With this in mind, site owners have pretty much forgotten about the relevance and importance of title tags and metadata.

How to find these errors

- Use Yoast to see how well your site titles and metadata work – red and amber mean more work can be done

- Keyword stuffing the keyword tag – are you using keywords in your keyword tag that do not appear in the page content?

- Use SEMrush and Screaming Frog to identify duplicate title tags or missing title tags

How to fix these errors

- Use Yoast to see how to rework the titles and metadata, especially the meta description, which has undergone a bit of a rebirth thanks to Google’s increase of the character count. Meta description data used to be set to 255 characters, but now the average length it is displaying is over 300 characters – take advantage of this increase

- Use SEMrush to identify and fix any missing or duplicate page title tags – make sure that every page has a unique title tag and page meta description

- Remove any non-specific keywords from the meta keyword tag
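
If you export URLs and titles from a crawl, the duplicate and missing title check is easy to reproduce. This Python sketch assumes a plain mapping of URL to title text; the sample titles are made up.

```python
from collections import defaultdict


def title_issues(titles_by_url):
    """Return (urls_missing_a_title, duplicated_title -> urls)."""
    missing = sorted(u for u, t in titles_by_url.items() if not (t or "").strip())
    groups = defaultdict(list)
    for url, title in titles_by_url.items():
        if (title or "").strip():
            # Compare case-insensitively so "Shoes" and "shoes" count as one.
            groups[title.strip().lower()].append(url)
    duplicates = {t: sorted(urls) for t, urls in groups.items() if len(urls) > 1}
    return missing, duplicates


# Hypothetical crawl export: URL -> title tag text.
titles = {
    "/shoes": "Trail Running Shoes",
    "/shoes?page=2": "Trail Running Shoes",
    "/contact": "",
    "/about": "About Us",
}
missing, duplicates = title_issues(titles)
print(missing)
print(duplicates)
```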

Bonus: Structured data

With Google becoming more sophisticated and offering webmasters the ability to add different markup data to display in different places within their sites, it’s easy to see how schema markup can get messy. From:

- Map data

- Review data

- Rich snippet data

- Product data

- Book Reviews

It’s easy to see how this can break a website or just get missed as the focus is elsewhere. The right schema markup data can in effect allow you to dominate the onscreen element of a SERP.

How to find these errors

- Use your GSC to identify what schema is being picked up by Google and where the errors are. Search Appearance > Structured Data – If no snippets are found, this means the code is wrong or you need to add schema code

- Use your GSC to identify what schema is being picked up by Google and where the errors are. Search Appearance > Rich Cards – If no Rich Cards are found, this means the code is wrong or you need to add schema code

- Test your schema with Google’s own markup helper

How to fix these errors

- Identify what schema you want to use on your website, then find a relevant plugin to assist. The All in One Schema plugin or the RichSnippets plugin can be used to manage and generate schema.

- Once the code is built, test it with Google’s own markup helper

- If you aren’t using WordPress, you can get a developer to build this code for you. Google prefers the JSON-LD format, so make sure your developer knows this format
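
For a sense of what a developer would build, here is a minimal Python sketch that generates a JSON-LD snippet for a product. The function name, the chosen properties and the sample values are illustrative assumptions; check schema.org and Google’s documentation for the properties your pages actually need.

```python
import json


def product_json_ld(name, description, price, currency="USD"):
    """Build a JSON-LD <script> block describing a product offer."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Made-up product for illustration only.
snippet = product_json_ld("Blue Trail Shoe", "Lightweight trail running shoe.", 89.5)
print(snippet)
```

Generating the markup from your product data, rather than typing it by hand, keeps it in sync with the page and makes it easier to test before release.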

Wrapping it up

As search engine algorithms continue to advance, so does the need for technical SEO.

If your website needs an audit, consulting or improvements, contact us directly for more help.