Crawl budget is a vital SEO concept for large websites with millions of pages or medium-sized websites with a few thousand pages that change daily.

An example of a website with tens of millions of pages would be eBay.com, and websites with thousands of pages that update frequently would be user review and rating websites similar to Gamespot.com.

There are so many tasks and issues an SEO expert has to consider that crawling is often put on the back burner.

But crawl budget can and should be optimized.

In this article, you’ll learn:

- How to improve your crawl budget along the way.

- How crawl budget as a concept has changed in the last couple of years.

(Note: If you have a website with just a few hundred pages, and pages are not indexed, we recommend reading our article on common issues causing indexing problems, as it is certainly not because of crawl budget.)

What Is Crawl Budget?

Crawl budget refers to the number of pages that search engine crawlers (i.e., spiders and bots) visit within a certain timeframe.

There are certain considerations that go into crawl budget, such as a tentative balance between Googlebot’s attempts to not overload your server and Google’s overall desire to crawl your domain.

Crawl budget optimization is a series of steps you can take to increase efficiency and the rate at which search engines’ bots visit your pages.

Why Is Crawl Budget Optimization Important?

Crawling is the first step to appearing in search. Without being crawled, new pages and page updates won’t be added to search engine indexes.

The more often crawlers visit your pages, the quicker updates and new pages appear in the index. Consequently, your optimization efforts will take less time to take hold and start affecting your rankings.

Google’s index contains hundreds of billions of pages and is growing each day. It costs search engines to crawl each URL, and with the growing number of websites, they want to reduce computational and storage costs by reducing the crawl rate and indexation of URLs.

There is also a growing urgency to reduce carbon emissions for climate change, and Google has a long-term strategy to improve sustainability and reduce carbon emissions.

These priorities could make it difficult for websites to be crawled effectively in the future. While crawl budget isn’t something you need to worry about with small websites with a few hundred pages, resource management becomes an important issue for massive websites. Optimizing crawl budget means having Google crawl your website by spending as few resources as possible.

So, let’s discuss how you can optimize your crawl budget in today’s world.

1. Disallow Crawling Of Action URLs In Robots.Txt

You may be surprised, but Google has confirmed that disallowing URLs will not affect your crawl budget. This means Google will still crawl your website at the same rate. So why do we discuss it here?

Well, if you disallow URLs that are not important, you basically tell Google to crawl the useful parts of your website at a higher rate.

For example, if your website has an internal search feature with query parameters like /?q=google, Google will crawl these URLs if they are linked from somewhere.

Similarly, in an e-commerce site, you might have facet filters generating URLs like /?color=red&size=s.

These query string parameters can create an infinite number of unique URL combinations that Google may try to crawl.

Those URLs basically don’t have unique content and just filter the data you have, which is great for user experience but not for Googlebot.

Allowing Google to crawl these URLs wastes crawl budget and affects your website’s overall crawlability. By blocking them via robots.txt rules, Google will focus its crawl efforts on more useful pages on your site.

Here is how to block internal search, facets, or any URLs containing query strings via robots.txt:

Disallow: *?*s=*

Disallow: *?*color=*

Disallow: *?*size=*

Each rule disallows any URL containing the respective query parameter, regardless of other parameters that may be present.

- * (asterisk): Matches any sequence of characters (including none).

- ? (question mark): Indicates the beginning of a query string.

- =*: Matches the = sign and any subsequent characters.

This approach helps avoid redundancy and ensures that URLs with these specific query parameters are blocked from being crawled by search engines.

Note, however, that this method disallows any URL containing the indicated characters, no matter where they appear. This can lead to unintended disallows. For example, a rule for a single-character query parameter will disallow any URL in which that character is followed by ‘=’, regardless of where it appears. If you disallow ‘s’, URLs containing ‘/?pages=2’ will be blocked because *?*s= also matches ‘?pages=’. If you want to disallow URLs with a specific single-character parameter, you can use a combination of rules:

Disallow: *?s=*

Disallow: *&s=*

The critical change is that there is no asterisk ‘*’ between the ‘?’ and ‘s’ characters. This method allows you to disallow the exact ‘s’ parameter in URLs, but you’ll need to add each variation individually.
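Before deploying wildcard rules, it can help to check them against a handful of sample URLs. The following is a minimal Python sketch, not Google’s actual matcher: it assumes only the two wildcards used in Google-style robots.txt patterns (`*` and a trailing `$`), it ignores Allow rules and rule precedence, and the helper names `robots_pattern_to_regex` and `is_disallowed` are hypothetical.

```python
import re

def robots_pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex.

    '*' matches any sequence of characters; a trailing '$' anchors the end.
    Everything else is matched literally from the start of the path.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))

def is_disallowed(path: str, patterns: list[str]) -> bool:
    """Return True if any disallow pattern matches the path plus query string."""
    return any(robots_pattern_to_regex(p).match(path) for p in patterns)

broad = ["*?*s=*"]             # asterisk between '?' and 's'
narrow = ["*?s=*", "*&s=*"]    # exact 's' parameter only

print(is_disallowed("/?s=shoes", broad))        # True
print(is_disallowed("/?pages=2", broad))        # True (unintended match)
print(is_disallowed("/?pages=2", narrow))       # False
print(is_disallowed("/?q=x&s=shoes", narrow))   # True
```

Running a list of real URLs from your own site through a check like this makes unintended disallows visible before the rules are published.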

Apply these rules to your specific use cases for any URLs that don’t provide unique content. For example, if you have wishlist buttons with “?add_to_wishlist=1” URLs, you need to disallow them with the rule:

Disallow: /*?*add_to_wishlist=*

This is a no-brainer and a natural first and most important step recommended by Google.

An example below shows how blocking those parameters helped to reduce the crawling of pages with query strings. Google was trying to crawl tens of thousands of URLs with different parameter values that didn’t make sense, leading to non-existent pages.

However, sometimes disallowed URLs might still be crawled and indexed by search engines. This may seem strange, but it isn’t generally cause for alarm. It usually means that other websites link to those URLs.

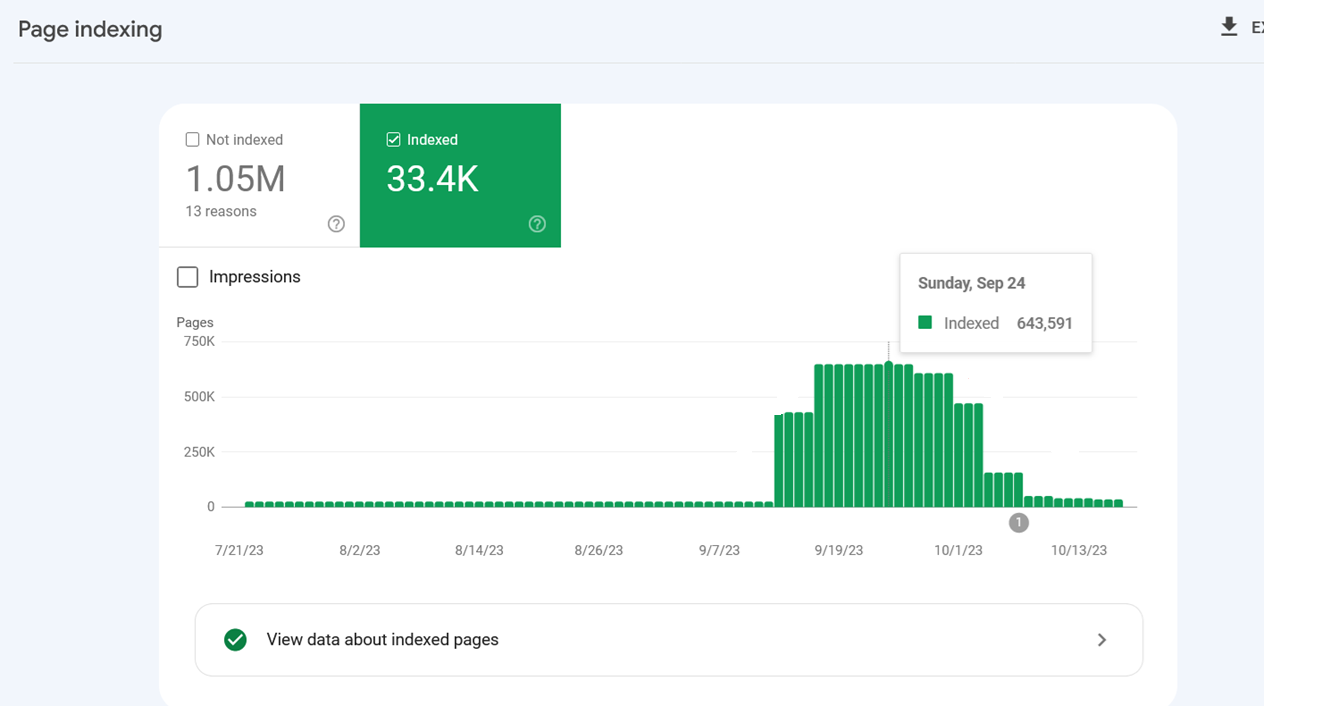

Indexing spiked as a result of Google listed inner search URLs after they have been blocked through robots.txt.

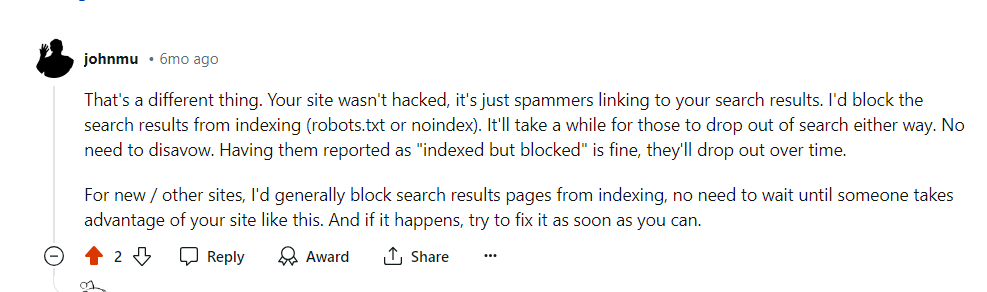

Indexing spiked as a result of Google listed inner search URLs after they have been blocked through robots.txt.Google confirmed that the crawling exercise will drop over time in these instances.

Google’s touch upon Reddit, July 2024

Google’s touch upon Reddit, July 2024One other essential advantage of blocking these URLs through robots.txt is saving your server sources. When a URL incorporates parameters that point out the presence of dynamic content material, requests will go to the server instead of the cache. This will increase the load in your server with each web page crawled.

Please remember not to use the “noindex meta tag” for blocking, since Googlebot has to perform a request to see the meta tag or HTTP response code, wasting crawl budget.

1.2. Disallow Unimportant Resource URLs In Robots.txt

Besides disallowing action URLs, you may want to disallow JavaScript files that are not part of the website layout or rendering.

For example, if you have JavaScript files responsible for opening images in a popup when users click, you can disallow them in robots.txt so Google doesn’t waste budget crawling them.

Here is an example of a disallow rule for a JavaScript file:

Disallow: /assets/js/popup.js

However, you should never disallow resources that are part of rendering. For example, if your content is dynamically loaded via JavaScript, Google needs to crawl the JS files to index the content they load.

Another example is REST API endpoints for form submissions. Say you have a form with the action URL “/rest-api/form-submissions/”.

Potentially, Google may crawl such URLs. They are in no way related to rendering, and it would be good practice to block them.

Disallow: /rest-api/form-submissions/

However, headless CMSs often use REST APIs to load content dynamically, so make sure you don’t block those endpoints.

In a nutshell, look at whatever isn’t related to rendering and block it.

2. Watch Out For Redirect Chains

Redirect chains occur when multiple URLs redirect to other URLs that also redirect. If this goes on for too long, crawlers may abandon the chain before reaching the final destination.

URL 1 redirects to URL 2, which redirects to URL 3, and so on. Chains can also take the form of infinite loops when URLs redirect to one another.

Avoiding these is a commonsense approach to website health.

Ideally, you would be able to avoid having even a single redirect chain on your entire domain.

But that may be an impossible task for a large website. 301 and 302 redirects are bound to appear, and you can’t fix redirects from inbound backlinks simply because you don’t have control over external websites.

One or two redirects here and there might not hurt much, but long chains and loops can become problematic.

To troubleshoot redirect chains, you can use one of the SEO tools like Screaming Frog, Lumar, or Oncrawl to find them.

When you discover a chain, the best way to fix it is to remove all the URLs between the first page and the final page. If you have a chain that passes through seven pages, then redirect the first URL directly to the seventh.
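Collapsing a chain amounts to following each redirect until it stops and then pointing every source straight at that final URL. Here is a minimal Python sketch under the assumption that you have already exported your redirects as a source-to-target mapping (for example from a crawler report). The function name `flatten_redirects` and the sample paths are hypothetical.

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Map every redirecting URL straight to its final destination.

    URLs that are part of a redirect loop are omitted from the result.
    """
    flattened = {}
    for start in redirects:
        seen = {start}
        current = redirects[start]
        # Keep following hops while the current URL itself redirects.
        while current in redirects:
            if current in seen:
                current = None  # loop detected, no final destination exists
                break
            seen.add(current)
            current = redirects[current]
        if current is not None:
            flattened[start] = current
    return flattened

chain = {
    "/a": "/b", "/b": "/c", "/c": "/d",   # three-hop chain ending at /d
    "/x": "/y", "/y": "/x",               # infinite loop
}
print(flatten_redirects(chain))
```

In this sample, "/a", "/b", and "/c" all end up pointing directly at "/d", while the looping "/x" and "/y" are left out so they can be fixed by hand.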

Another great way to reduce redirect chains is to replace internal URLs that redirect with their final destinations in your CMS.

Depending on your CMS, there may be different solutions in place; for example, you can use this plugin for WordPress. If you have a different CMS, you may need to use a custom solution or ask your dev team to do it.

3. Use Server Side Rendering (HTML) Whenever Possible

Now, if we’re talking about Google, its crawler uses the latest version of Chrome and is able to see content loaded by JavaScript just fine.

But let’s think critically. What does that mean? Googlebot crawls a page and resources such as JavaScript, then spends more computational resources to render them.

Remember, computational costs are important for Google, and it wants to reduce them as much as possible.

So why render content via JavaScript (client side) and add extra computational cost for Google to crawl your pages?

Because of that, whenever possible, you should stick to HTML.

That way, you’re not hurting your chances with any crawler.

4. Improve Page Speed

As we discussed above, Googlebot crawls and renders pages with JavaScript. The fewer resources it has to spend rendering your webpages, the easier they are to crawl, and that depends on how well optimized your website speed is.

Google says:

Google’s crawling is limited by bandwidth, time, and availability of Googlebot instances. If your server responds to requests quicker, we might be able to crawl more pages on your site.

So using server-side rendering is already a great step towards improving page speed, but you also need to make sure your Core Web Vital metrics are optimized, especially server response time.

5. Take Care of Your Internal Links

Google crawls URLs that are on the page, and always keep in mind that different URLs are counted by crawlers as separate pages.

If you have a website with the ‘www’ version, make sure your internal URLs, especially in navigation, point to the canonical version, i.e. the ‘www’ version, and vice versa.

Another common mistake is a missing trailing slash. If your URLs have a trailing slash at the end, make sure your internal URLs also have it.

Otherwise, unnecessary redirects, for example, “https://www.example.com/sample-page” to “https://www.example.com/sample-page/”, will result in two crawls per URL.
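One way to catch these mismatches is to normalize internal links before they are written into templates or content. The sketch below assumes, purely for illustration, that the ‘www’ host with a trailing slash is the canonical form. The hostnames, the `canonicalize_internal_url` helper, and the rule that a last path segment containing a dot is a file are all assumptions to adapt to your own site.

```python
from urllib.parse import urlsplit, urlunsplit

CANONICAL_HOST = "www.example.com"                   # assumption: 'www' is canonical
INTERNAL_HOSTS = {"example.com", "www.example.com"}  # hosts treated as internal

def canonicalize_internal_url(url: str) -> str:
    """Rewrite an internal URL to the canonical host with a trailing slash."""
    parts = urlsplit(url)
    if parts.netloc.lower() not in INTERNAL_HOSTS:
        return url  # external link: leave untouched
    path = parts.path or "/"
    # Add a trailing slash unless the last segment looks like a file name.
    last_segment = path.rsplit("/", 1)[-1]
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    return urlunsplit((parts.scheme, CANONICAL_HOST, path, parts.query, parts.fragment))

print(canonicalize_internal_url("https://example.com/sample-page"))
# https://www.example.com/sample-page/
```

Linking straight to the canonical form means the crawler fetches the page once instead of fetching a redirect first.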

Another important aspect is to avoid broken internal links and soft 404 pages, which can eat your crawl budget.

And if that wasn’t bad enough, they also hurt your user experience!

In this case, again, I’m in favor of using a tool for a website audit.

WebSite Auditor, Screaming Frog, Lumar or Oncrawl, and SE Ranking are examples of great tools for a website audit.

6. Update Your Sitemap

Once again, it’s a real win-win to take care of your XML sitemap.

The bots will have a much better and easier time understanding where the internal links lead.

Use only canonical URLs in your sitemap.

Also, make sure that it corresponds to the newest uploaded version of robots.txt and loads fast.
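If your CMS does not generate the sitemap for you, a small script can build one from a list of canonical URLs. This is a minimal sketch that assumes you already have that list. It emits only the `urlset`, `url`, and `loc` elements of the sitemaps.org protocol, and the function name `build_sitemap` and the sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(canonical_urls):
    """Return sitemap XML as a string, listing each canonical URL once."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    # dict.fromkeys drops duplicates while keeping the original order.
    for url in dict.fromkeys(canonical_urls):
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

print(build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/sample-page/",
    "https://www.example.com/",   # duplicate, emitted only once
]))
```

Feeding the function only canonical URLs keeps redirecting and parameterized variants out of the file.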

7. Implement 304 Status Code

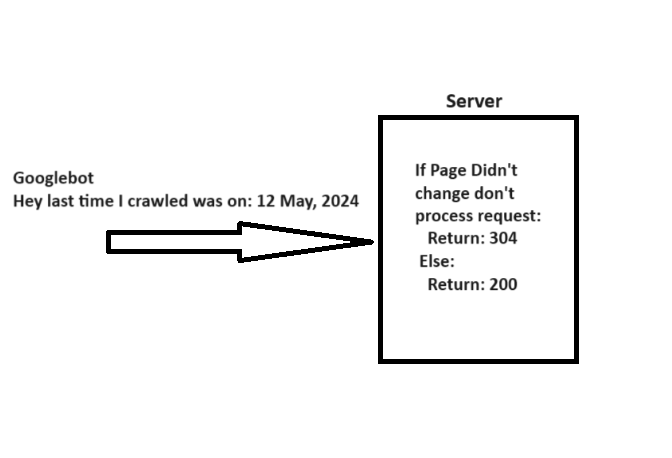

When crawling a URL, Googlebot sends a date via the “If-Modified-Since” header, which is additional information about the last time it crawled the given URL.

If your webpage hasn’t changed since then (the date specified in “If-Modified-Since”), you may return the “304 Not Modified” status code with no response body. This tells search engines that the webpage content didn’t change, and Googlebot can use the version it has on file from its last visit.
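The decision itself is a date comparison. The following Python sketch shows that logic in isolation, without any web framework. The function name `conditional_response` and its return shape are hypothetical, and it assumes you can look up a timezone-aware UTC last-modified time for each page.

```python
from datetime import datetime, timezone
from email.utils import format_datetime, parsedate_to_datetime

def conditional_response(if_modified_since, last_modified, body):
    """Return (status, headers, body) for a GET that may carry If-Modified-Since.

    last_modified must be a timezone-aware UTC datetime.
    """
    headers = {"Last-Modified": format_datetime(last_modified, usegmt=True)}
    if if_modified_since:
        try:
            since = parsedate_to_datetime(if_modified_since)
        except (TypeError, ValueError):
            since = None  # unparseable header: fall back to a full response
        if since is not None:
            if since.tzinfo is None:
                since = since.replace(tzinfo=timezone.utc)
            # HTTP dates have one-second resolution, so ignore microseconds.
            if last_modified.replace(microsecond=0) <= since:
                return 304, headers, b""
    return 200, headers, body

page_changed = datetime(2024, 7, 1, 12, 0, 0, tzinfo=timezone.utc)
status, headers, payload = conditional_response(
    "Mon, 01 Jul 2024 12:00:00 GMT", page_changed, b"<html>...</html>"
)
print(status, len(payload))  # 304 0
```

Any missing or malformed header falls through to a normal 200 response with the full body, so a parsing problem never results in an empty page being served.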

A simple explanation of how the 304 Not Modified HTTP status code works.

Imagine how many server resources you can save, while also helping Googlebot save resources, when you have millions of webpages. Quite a lot, isn’t it?

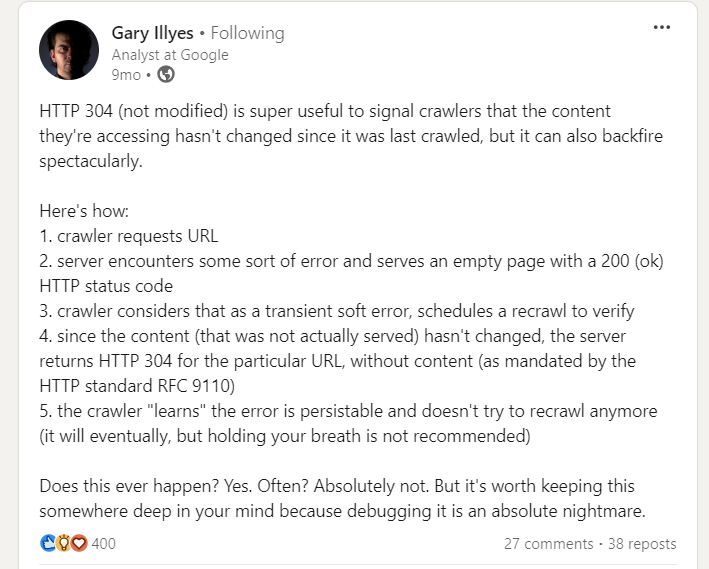

However, there is a caveat when implementing the 304 status code, pointed out by Gary Illyes.

Gary Illyes on LinkedIn

So be cautious. Server errors serving empty pages with a 200 status can cause crawlers to stop recrawling, leading to long-lasting indexing issues.

8. Hreflang Tags Are Vital

To analyze your localized pages, crawlers use hreflang tags. You should be telling Google about localized versions of your pages as clearly as possible.

First off, use the <link rel="alternate" hreflang="lang_code" href="url_of_page" /> in your page’s header, where “lang_code” is a code for a supported language.

It’s best to use the

Read: 6 Common Hreflang Tag Mistakes Sabotaging Your International SEO

9. Monitoring and Maintenance

Check your server logs and Google Search Console’s Crawl Stats report to monitor crawl anomalies and identify potential problems.

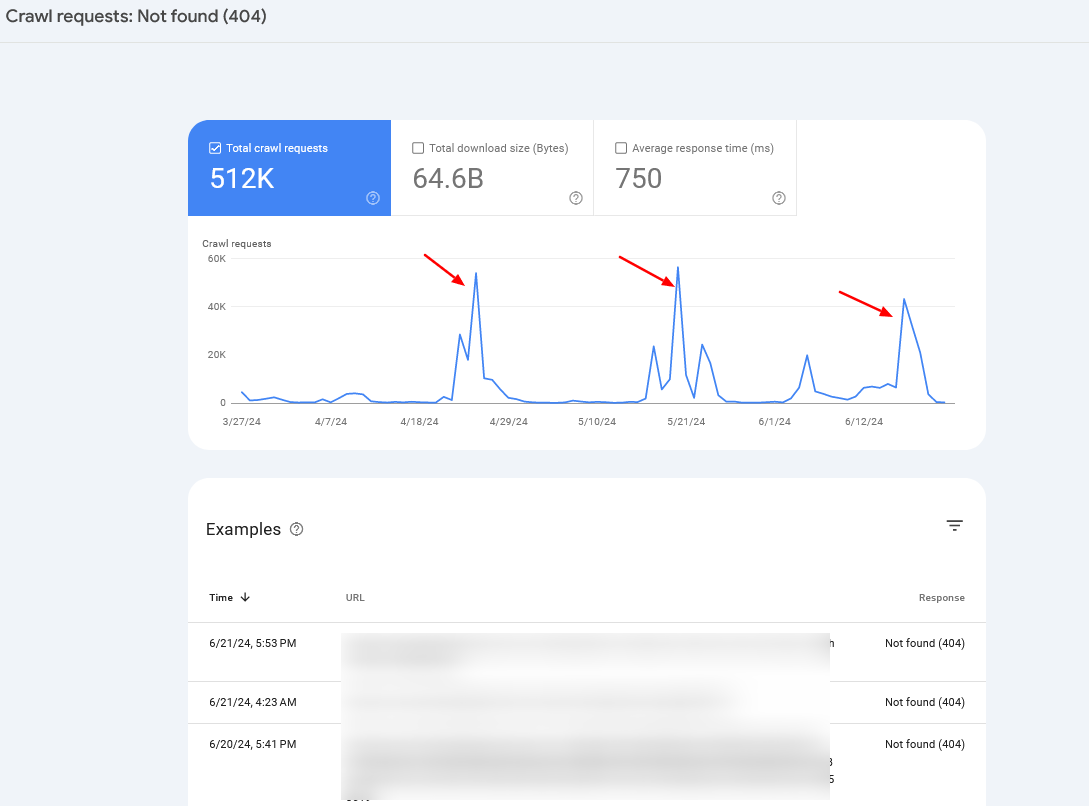

If you notice periodic crawl spikes of 404 pages, in 99% of cases it is caused by infinite crawl spaces, which we have discussed above, or it indicates other problems your website may be experiencing.

Crawl rate spikes

Often, you may want to combine server log information with Search Console data to identify the root cause.
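On the server log side, a first pass can be as simple as counting response codes for requests that identify as Googlebot. The sketch below assumes the common “combined” access log format. The regular expression, the `googlebot_status_counts` helper, and the sample log lines are illustrative assumptions rather than output from a real server. Note that the user-agent string can be spoofed, so this is a rough filter, not verification.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user-agent fields
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_status_counts(lines):
    """Count status codes for requests whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Jul/2024:10:00:00 +0000] "GET /?s=shoes HTTP/1.1" 404 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jul/2024:10:00:01 +0000] "GET /sample-page/ HTTP/1.1" 200 2048 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Jul/2024:10:00:02 +0000] "GET /sample-page/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
print(googlebot_status_counts(sample))
```

Grouping the same counts by day or by URL pattern is a natural next step when you need to line a spike up with the Crawl Stats report.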

Summary

So, if you were wondering whether crawl budget optimization is still important for your website, the answer is clearly yes.

Crawl budget is, was, and probably will be an important thing to keep in mind for every SEO professional.

Hopefully, these tips will help you optimize your crawl budget and improve your SEO performance. But remember, getting your pages crawled doesn’t mean they will be indexed.

In case you face indexation issues, I suggest reading the following articles:

Featured Image: BestForBest/Shutterstock

All screenshots taken by author