If you are an SEO practitioner or digital marketer reading this article, you may have experimented with AI and chatbots in your everyday work.

But the question is, how can you make the most of AI beyond using a chatbot user interface?

For that, you need a profound understanding of how large language models (LLMs) work and to learn the basics of coding. And yes, coding is absolutely necessary to succeed as an SEO professional nowadays.

This is the first in a series of articles that aim to level up your skills so you can start using LLMs to scale your SEO tasks. We believe that in the future, this skill will be required for success.

We need to start from the basics. This article covers the essential information so that, later in this series, you will be able to use LLMs to scale your SEO or marketing efforts for the most tedious tasks.

Contrary to other similar articles you’ve read, we’ll start here from the end. The video below illustrates what you will be able to do after reading all of the articles in the series on how to use LLMs for SEO.

Our team uses this tool to make internal linking faster while maintaining human oversight.

Did you like it? This is what you will be able to build yourself very soon.

Now, let’s start with the basics and equip you with the required background knowledge in LLMs.

What Are Vectors?

In mathematics, vectors are objects described by an ordered list of numbers (components) corresponding to the coordinates in the vector space.

A simple example of a vector is a vector in two-dimensional space, which is represented by (x,y) coordinates as illustrated below.

In this case, the coordinate x=13 represents the length of the vector’s projection on the X-axis, and y=8 represents the length of the vector’s projection on the Y-axis.

Vectors that are defined with coordinates have a length, which is called the magnitude of a vector or norm. For our two-dimensional simplified case, it is calculated by the formula:

$$\|v\| = \sqrt{x^2 + y^2}$$
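As a minimal sketch, the magnitude can be computed in Python as the square root of the sum of the squared coordinates. The function name `magnitude` is our own choice for illustration, and the three-dimensional vector is a made-up example.

```python
import math

def magnitude(vector):
    """Return the length (L2 norm) of a vector with any number of coordinates."""
    return math.sqrt(sum(component ** 2 for component in vector))

# The two-dimensional example from the text: x=13, y=8.
print(round(magnitude([13, 8]), 3))  # 15.264

# The same function works for more coordinates (made-up 3D example).
print(magnitude([2, 3, 6]))  # 7.0
```

The same function works unchanged for vectors with hundreds or thousands of coordinates, which is the case for text embeddings.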

However, mathematicians went ahead and defined vectors with an arbitrary number of abstract coordinates (X1, X2, X3 … Xn), which is called an “N-dimensional” vector.

In the case of a vector in three-dimensional space, that would be three numbers (x,y,z), which we can still interpret and understand, but anything above that is beyond our imagination, and everything becomes an abstract concept.

And here is where LLM embeddings come into play.

What Is Text Embedding?

Text embeddings are a subset of LLM embeddings, which are abstract high-dimensional vectors representing text that capture semantic contexts and relationships between words.

In LLM jargon, “words” are called data tokens, with each word being a token. More abstractly, embeddings are numerical representations of those tokens, encoding relationships between any data tokens (units of data), where a data token can be an image, sound recording, text, or video frame.

In order to calculate how close words are semantically, we need to convert them into numbers. Just like you subtract numbers (e.g., 10-6=4) and you can tell that the distance between 10 and 6 is 4 points, it is possible to subtract vectors and calculate how close the two vectors are.

Thus, understanding vector distances is important in order to grasp how LLMs work.

There are different ways to measure how close vectors are:

- Euclidean distance.

- Cosine similarity or distance.

- Jaccard similarity.

- Manhattan distance.

Each has its own use cases, but we’ll discuss only the commonly used cosine and Euclidean distances.
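For completeness, here is a rough sketch of the two measures from the list that this article does not cover further. The function names, the sample vectors, and the keyword phrases are our own illustrative assumptions. Manhattan distance sums the absolute coordinate differences, while Jaccard similarity compares two sets, such as the words in two phrases.

```python
def manhattan_distance(a, b):
    """Sum of absolute coordinate differences between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("Vectors must have the same number of dimensions")
    return sum(abs(x - y) for x, y in zip(a, b))

def jaccard_similarity(set_a, set_b):
    """Size of the intersection divided by the size of the union of two sets."""
    union = set_a | set_b
    if not union:
        return 1.0  # Convention chosen here: two empty sets count as identical.
    return len(set_a & set_b) / len(union)

print(manhattan_distance([13, 8], [10, 4]))  # 7

# Jaccard works on sets, for example the words in two keyword phrases.
words_a = set("best running shoes".split())
words_b = set("best trail running shoes".split())
print(jaccard_similarity(words_a, words_b))  # 0.75
```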

What Is The Cosine Similarity?

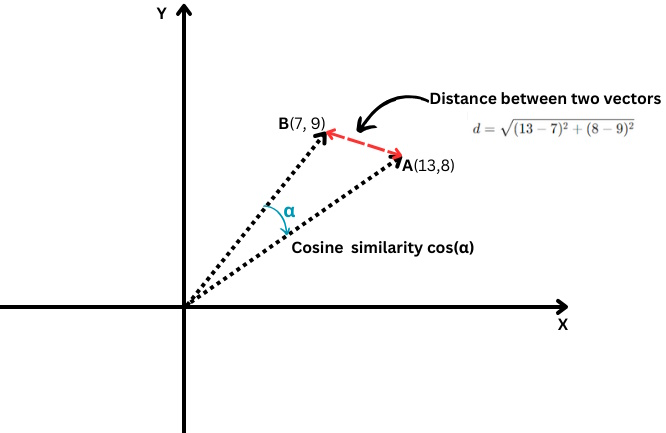

It measures the cosine of the angle between two vectors, i.e., how closely these two vectors are aligned with each other.

Euclidean distance vs. cosine similarity

It’s defined as follows:

$$\cos(\theta) = \frac{A \cdot B}{\|A\| \, \|B\|}$$

Here, the dot product of the two vectors is divided by the product of their magnitudes, a.k.a. lengths.

Its values range from -1, which means completely opposite, to 1, which means identical. A value of ‘0’ means the vectors are perpendicular.
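A minimal Python sketch of this calculation follows, assuming plain lists of numbers as vectors. The function name `cosine_similarity` and the toy two-dimensional vectors are ours, chosen so that the three boundary cases are easy to verify by hand.

```python
import math

def cosine_similarity(a, b):
    """Dot product of two vectors divided by the product of their magnitudes."""
    if len(a) != len(b):
        raise ValueError("Vectors must have the same number of dimensions")
    dot_product = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("Cosine similarity is undefined for a zero vector")
    return dot_product / (norm_a * norm_b)

print(cosine_similarity([1, 0], [1, 0]))   # 1.0  (same direction)
print(cosine_similarity([1, 0], [0, 1]))   # 0.0  (perpendicular)
print(cosine_similarity([1, 0], [-1, 0]))  # -1.0 (opposite direction)
```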

In terms of text embeddings, achieving the exact cosine similarity value of -1 is unlikely, but here are examples of texts with 0 or 1 cosine similarities.

Cosine Similarity = 1 (Identical)

- “Top 10 Hidden Gems for Solo Travelers in San Francisco”

- “Top 10 Hidden Gems for Solo Travelers in San Francisco”

These texts are identical, so their embeddings would be the same, resulting in a cosine similarity of 1.

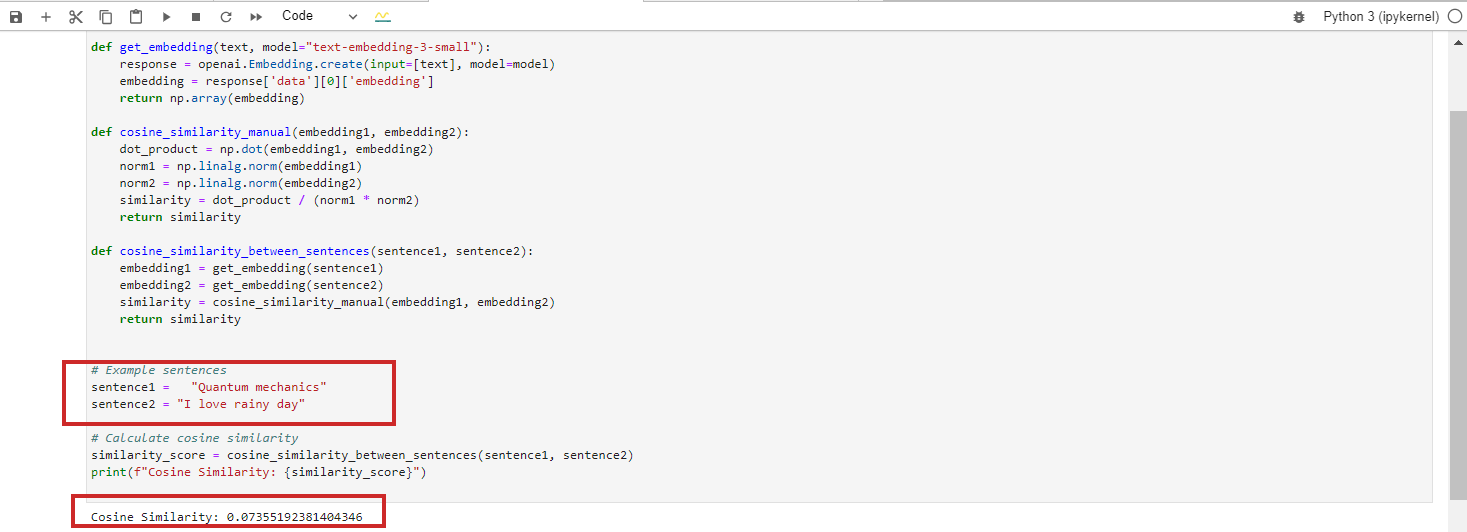

Cosine Similarity = 0 (Perpendicular, Which Means Unrelated)

- “Quantum mechanics”

- “I like rainy days”

These texts are completely unrelated, resulting in a cosine similarity of 0 between their BERT embeddings.

However, if you run Google Vertex AI’s embedding model ‘text-embedding-preview-0409’, you will get 0.3. With OpenAI’s ‘text-embedding-3-large’ model, you will get 0.017.

(Note: In the next chapters, we will practice working with embeddings in detail using Python and Jupyter.)

We’re skipping the case with cosine similarity = -1 because it’s highly unlikely to happen.

If you try to get cosine similarity for text with opposite meanings like “love” vs. “hate” or “the successful project” vs. “the failing project,” you will get 0.5-0.6 cosine similarity with Google Vertex AI’s ‘text-embedding-preview-0409’ model.

It’s because the words “love” and “hate” often appear in similar contexts related to emotions, and “successful” and “failing” are both related to project outcomes. The contexts in which they are used might overlap significantly in the training data.

Cosine similarity can be used for the following SEO tasks:

- Classification.

- Keyword clustering.

- Implementing redirects.

- Internal linking.

- Duplicate content detection.

- Content recommendation.

- Competitor analysis.

Cosine similarity focuses on the direction of the vectors (the angle between them) rather than their magnitude (length). As a result, it can capture semantic similarity and determine how closely two pieces of content align, even if one is much longer or uses more words than the other.
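To see why magnitude does not matter, consider this small sketch with two made-up vectors that point in the same direction but differ tenfold in length. The vectors and function names are hypothetical stand-ins for the embeddings of a short and a long text. Cosine similarity treats them as identical, while the Euclidean distance between them is large.

```python
import math

def cosine_similarity(a, b):
    """Dot product divided by the product of the two magnitudes."""
    dot_product = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot_product / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between the end points of two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

short_text = [1, 2]    # hypothetical vector for a short text
long_text = [10, 20]   # same direction, ten times the magnitude

print(round(cosine_similarity(short_text, long_text), 6))   # 1.0
print(round(euclidean_distance(short_text, long_text), 2))  # 20.12
```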

Deep diving into each of these will be a goal of upcoming articles we’ll publish.

What Is The Euclidean Distance?

If you have two vectors A(X1,Y1) and B(X2,Y2), the Euclidean distance is calculated by the following formula:

$$d(A, B) = \sqrt{(X_2 - X_1)^2 + (Y_2 - Y_1)^2}$$

It’s like using a ruler to measure the distance between two points (the red line in the chart above).
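As a rough sketch, the Euclidean distance is the square root of the sum of the squared coordinate differences, which translates into a few lines of Python. The function name and the sample points are our own, and the same code works for vectors with any number of coordinates.

```python
import math

def euclidean_distance(a, b):
    """Square root of the sum of squared coordinate differences."""
    if len(a) != len(b):
        raise ValueError("Vectors must have the same number of dimensions")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

point_a = (1, 2)  # A(X1, Y1)
point_b = (4, 6)  # B(X2, Y2)

# sqrt((4 - 1)^2 + (6 - 2)^2) = sqrt(9 + 16)
print(euclidean_distance(point_a, point_b))  # 5.0
```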

Euclidean distance can be used for the following SEO tasks:

- Evaluating keyword density in the content.

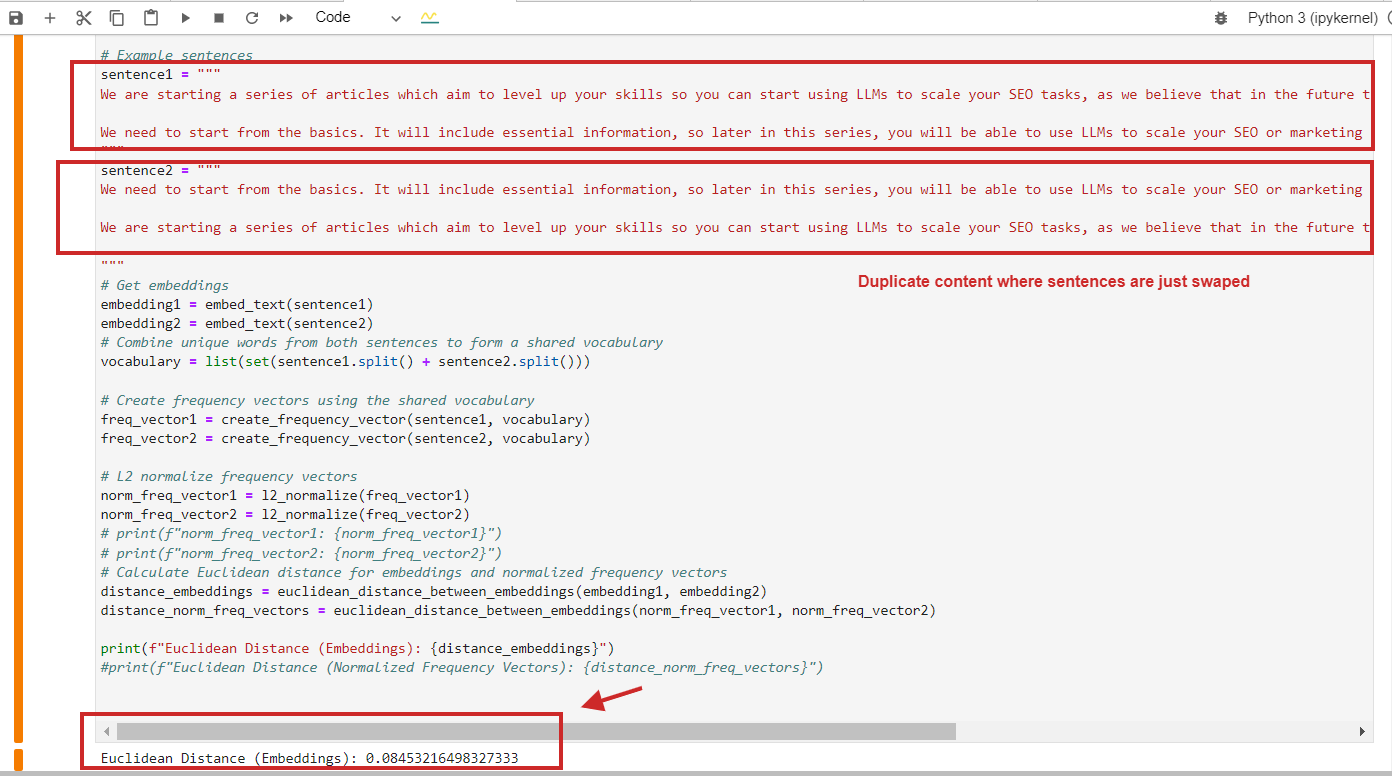

- Finding duplicate content with a similar structure.

- Analyzing anchor text distribution.

- Keyword clustering.

Here is an example of a Euclidean distance calculation with a value of 0.08, which is close to 0, for duplicate content where the paragraphs are just swapped. A distance that close to 0 means the content we compare is essentially the same.

Euclidean distance calculation example of duplicate content

Of course, you can use cosine similarity, and it will detect duplicate content with a cosine similarity of 0.9 out of 1 (almost identical).

Here is a key point to remember: You shouldn’t merely rely on cosine similarity but use other methods, too, as Netflix’s research paper suggests that using cosine similarity can lead to meaningless “similarities.”

“We show that cosine similarity of the learned embeddings can in fact yield arbitrary results. We find that the underlying reason is not cosine similarity itself, but the fact that the learned embeddings have a degree of freedom that can render arbitrary cosine-similarities.”

As an SEO professional, you don’t need to be able to fully comprehend that paper, but remember that research shows that other distance methods, such as the Euclidean, should be considered based on the project needs and the results you get, in order to reduce false positives.

What Is L2 Normalization?

L2 normalization is a mathematical transformation applied to vectors to make them unit vectors with a length of 1.

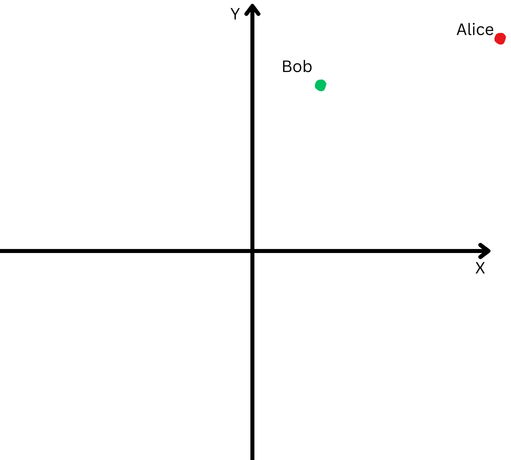

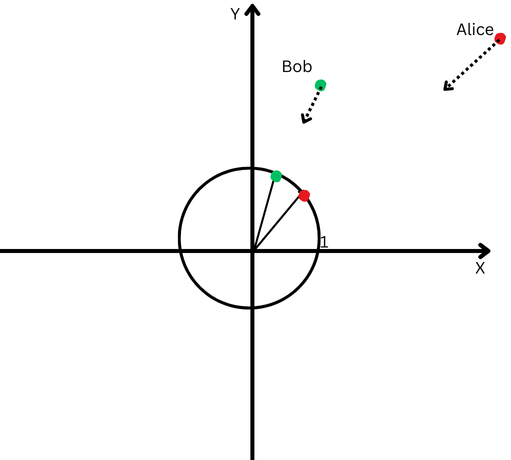

To explain in simple terms, let’s say Bob and Alice walked a long distance. Now, we want to compare their directions. Did they follow similar paths, or did they go in completely different directions?

“Alice” is represented by a red dot in the upper right quadrant, and “Bob” is represented by a green dot.

However, since they are far from their origin, we will have difficulty measuring the angle between their paths because they have gone too far.

On the other hand, we can’t claim that if they are far from each other, it means their paths are different.

L2 normalization is like bringing both Alice and Bob back to the same closer distance from the starting point, say one foot from the origin, to make it easier to measure the angle between their paths.

Now, we see that even though they are far apart, their path directions are quite close.

A Cartesian plane with a circle centered at the origin.

This means that we’ve removed the effect of their different path lengths (a.k.a. vector magnitudes) and can focus purely on the direction of their movements.

In the context of text embeddings, this normalization helps us focus on the semantic similarity between texts (the direction of the vectors).

Most of the embedding models, such as OpenAI’s ‘text-embedding-3-large’ or Google Vertex AI’s ‘text-embedding-preview-0409’ models, return pre-normalized embeddings, which means you don’t need to normalize.

But, for example, BERT model ‘bert-base-uncased’ embeddings are not pre-normalized.
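If you are not sure whether a model returns pre-normalized embeddings, you can check the vector length yourself and normalize when needed. Below is a minimal sketch with made-up two-dimensional “Alice” and “Bob” vectors. The function names and the tolerance value are our own assumptions, not part of any embedding API.

```python
import math

def l2_normalize(vector):
    """Scale a vector so that its length (L2 norm) becomes 1."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("Cannot normalize a zero vector")
    return [x / norm for x in vector]

def is_unit_length(vector, tolerance=1e-6):
    """Check whether a vector is already normalized, within a small tolerance."""
    norm = math.sqrt(sum(x * x for x in vector))
    return math.isclose(norm, 1.0, abs_tol=tolerance)

alice = [30, 40]  # made-up coordinates, far from the origin
bob = [5, 12]     # made-up coordinates, closer to the origin

alice_unit = l2_normalize(alice)
bob_unit = l2_normalize(bob)

print(is_unit_length(alice))       # False
print(is_unit_length(alice_unit))  # True

# For unit vectors, the dot product alone equals the cosine similarity.
dot_product = sum(x * y for x, y in zip(alice_unit, bob_unit))
print(round(dot_product, 3))  # 0.969
```

Once both vectors have a length of 1, the division by the magnitudes in the cosine similarity formula is no longer needed, so the dot product alone gives the same result.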

Conclusion

This was the introductory chapter of our series of articles to familiarize you with the jargon of LLMs, which I hope made the information accessible without needing a PhD in mathematics.

If you still have trouble memorizing these, don’t worry. As we cover the next sections, we’ll refer to the definitions given here, and you will be able to understand them through practice.

The next chapters will be even more interesting:

- Introduction To OpenAI’s Text Embeddings With Examples.

- Introduction To Google’s Vertex AI Text Embeddings With Examples.

- Introduction To Vector Databases.

- How To Use LLM Embeddings For Internal Linking.

- How To Use LLM Embeddings For Implementing Redirects At Scale.

- Putting It All Together: LLMs-Based WordPress Plugin For Internal Linking.

The goal is to level up your skills and prepare you to face challenges in SEO.

Many of you may say that there are tools you can buy that do all these things automatically, but those tools will not be able to perform many specific tasks based on your project needs, which require a custom approach.

Using SEO tools is always great, but having skills is even better!

Featured Image: Krot_Studio/Shutterstock